I’ve been using AI tools in my daily workflow for a couple of years now. ChatGPT, Midjourney, Copilot, whatever came out.

I was an early adopter, not out of FOMO but because I work in product and design, and if there’s a tool that can remove friction from the creative process, I’ll try it.

But Claude is different. And I need to explain why.

The first thing I noticed: this model thinks differently.

When you open Claude for the first time, you expect more of the same. Another chatbot wrapping answers in bubble wrap. What you actually get is something else: responses that feel like they were written by someone who read the same books you did, who gets context, who doesn’t pad the answer.

For someone who works in product design and business strategy, that matters a lot. Most of the time I don’t need an AI to generate text; I need it to help me think. And Claude, for reasons I can’t fully articulate, does that better.

I don’t know if it’s Anthropic’s training approach, their constitutional AI philosophy, or just better internal prompt engineers. But the difference is real.

Claude as a product tool: what actually works

I’ll be specific because vague opinion pieces bore me.

User research synthesis and discovery. I have long conversations with Claude where I paste interview notes, qualitative findings, raw data, and ask it to find patterns. The output isn’t perfect, but it saves me hours of affinity mapping I used to do by hand. Is it a substitute for thinking? No. Is it an accelerator? Absolutely.

Writing PRDs and technical documentation. This is where I get the most ROI. Not because Claude writes the docs for me, but because it helps me structure, spots gaps in my reasoning, plays devil’s advocate. I tell it “critique this requirement” and it gives me three angles I hadn’t considered. A junior PM would do the same thing, but I need it available at 11pm.

Feature brainstorming. More reservations here, but it works for unblocking sessions when you’ve been staring at a blank canvas for twenty minutes.

The real problem: what nobody tells you

Here’s the uncomfortable part.

Claude is very good. Too good at some things. And that creates a dependency that isn’t innocent.

I’ve noticed in myself, and in teams I work with, that the muscle of thinking without assistance atrophies. Before Claude, when I had a hard design problem, I sat with it. Let it simmer. Went for a walk, came back, tried a different angle. Now the first reaction is to open a new conversation. And that worries me.

Not because AI is bad. But because the speed it gives you comes at the cost of a deeper thinking process where, often, the best ideas live.

Traditional design thinking has intentional friction. The Google Design Sprint isn’t poorly designed, but the pace it forces comes with a tradeoff: you sacrifice idea maturity. Claude accelerates that process even further, which can be great for startups in survival mode but dangerous for products that need depth.

And for business? An honest breakdown

Three profiles, because the answer is very different depending on who you are:

Freelance / consultant. Claude is a brutal force multiplier. If you work solo and bill by project, the productivity it gives you translates directly into margin. You ship more in less time without hiring. For this profile, it’s probably the best software investment you can make right now.

Early-stage startup. Works really well for fast iteration: copy, documentation, analysis, idea exploration. The risk is using Claude to appear more advanced than you actually are, both internally and in front of investors. AI can make a team of four look like twelve, but looking bigger can’t replace what a real team gives you: friction, debate, shared learning.

Mid-to-large company. The most interesting use case and the least exploited. Most companies claiming to “implement AI” are using GPT to write newsletters. Claude has real potential in scaling customer feedback analysis, automating content design pipelines, and supporting strategic decision-making. But it requires integration investment and cultural change, and that’s where most organizations stall.

Anthropic vs OpenAI: the battle that matters more than you think

I don’t want to do a feature comparison listicle because there are already a thousand of those. But I do want to say something about company philosophy.

Anthropic was founded on the premise that AI can be dangerous and that it’s worth building anyway, but carefully. That’s high-stakes AI with responsibility baked in. OpenAI started in a similar place and pivoted toward the commercial race. I’m not saying one is good and the other bad. I’m saying that the design decisions inside a model reflect the values of whoever built it.

When I use Claude and notice it refuses certain things, or hedges more than ChatGPT, or doesn’t give me what I want on the first try, part of that is intentional design. It creates friction for me as a user because I want the answer fast. But as someone who thinks about product and consequences, I respect it.

What I’d like to see

In case anyone at Anthropic reads this, which they probably won’t:

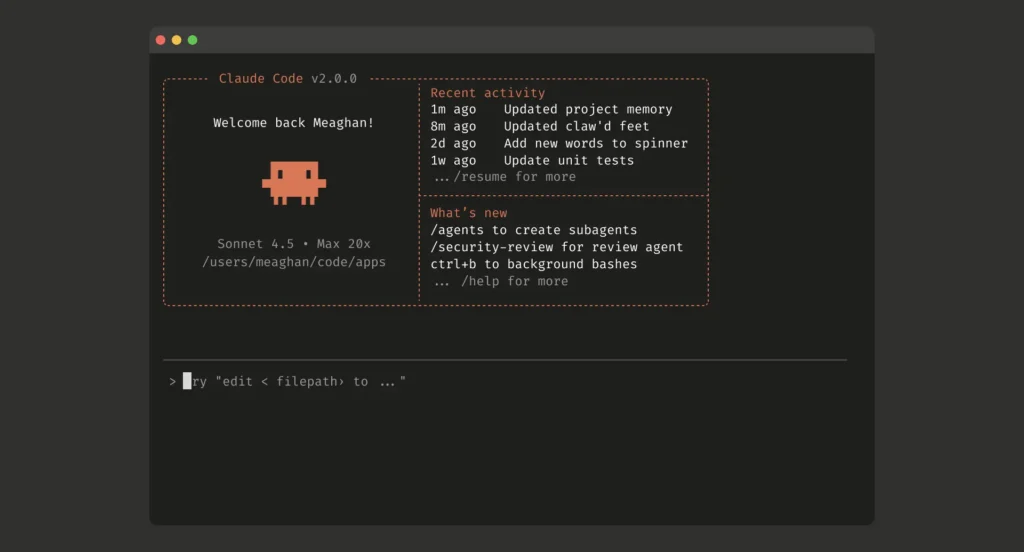

Better integration into design workflows. Claude inside Figma, Notion, Linear, not as a filler plugin but as a contextual intelligence layer that understands what you’re building. This exists in fragments, but the level of integration I need to make it truly transformative isn’t there yet.

More robust and controllable memory. Context handling on long projects is good but not enough. I need Claude to remember who my user is, what the product strategy is, what we’ve decided and why, without me having to repeat it every week.

Transparency in reasoning. When Claude gives me a strategic recommendation, I want to know why. Not a generic why, but the actual decision tree. This exists partially in extended reasoning models, but it’s still clunky.

Conclusion: AI won’t take your job, but it will change it

I’ve heard that phrase a thousand times and it feels both true and insufficient.

Claude isn’t going to replace me as a designer or as someone who thinks about product strategy. But it is changing which skills actually matter. Before, knowing how to do things was the point. Now the point is knowing what to ask of something that already knows how to do things.

And that’s a deep cultural shift I don’t think we’ve really started to process.

AI is an ally. But like all allies, it comes with its own agenda, its own limitations, and its own cost of entry. Using it well requires more judgment, not less. And judgment is not something you can delegate.